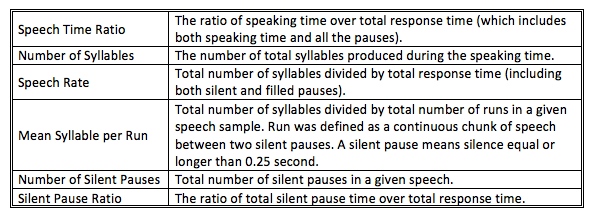

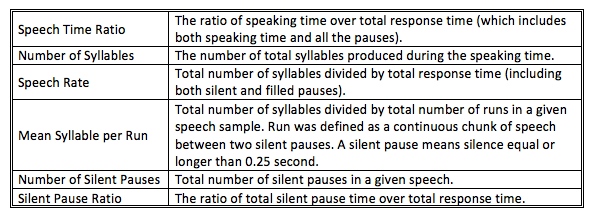

Table 1. Fluency measures significantly predictive of subjective quality measures

Mengxi Lin – lin211@purdue.edu

Alexander L. Francis – francisa@purdue.edu

Purdue University

Heavilon Hall, 500 Oval Dr.

West Lafayette, IN 47907

Popular version of paper 2aSC21

Presented Tuesday morning, May 6, 2014

167th ASA Meeting, Providence

---------------------------------

Because English serves as a lingua franca in today’s world, native English speakers often need to communicate with people who speak English as a second language. While the speech of some non-native English speakers seems to be easily understood by native listeners despite the presence of a foreign accent, other non-native speech seems to be more demanding, such that listeners must expend considerable effort in order to understand it. One reason for this increased difficulty may simply be the speaker’s pronunciation accuracy.

If a non-native speaker’s pronunciations of English sounds differ sufficiently from the sounds that native listeners expect, these differences may force native listeners to work much harder to understand the divergent speech patterns. However, non-native speakers also tend to differ from native ones in terms of fluency — the degree to which a speaker is able to produce appropriately structured phrases without unnecessary pauses, self-corrections or restarts.

Previous studies have shown that measures of fluency are strongly predictive of listeners’ subjective ratings of the acceptability of non-native speech: less fluent speech is consistently considered less acceptable (Ginther, Dimova, & Yang, 2010; Xi & Mollaun, 2006). However, since less fluent speakers also tend to have less accurate pronunciations, it is unclear whether or how these factors might interact to influence the amount of effort listeners exert to understand non-native speech. Nor is it clear how listening effort might relate to the perceived quality or acceptability of speech.

To investigate this question, the speech of 20 non-native speakers of English varying in proficiency (high and low) and native language (Chinese and Korean) was evaluated. These speech samples were drawn from the database of the Oral English Proficiency Test (OEPT; OEPP, 2014) of Purdue University, a test that is designed to determine whether international graduate students are qualified to serve as teaching assistants. Ten speech samples were randomly selected from each of the two proficiency levels (low and high).

Two groups of native listeners of American English were recruited. The first group of 20 listeners rated the subjective speech quality (listening effort, acceptability and intelligibility) of each of the 20 speech samples on a scale of 1-20. The other group of 10 listeners completed a word recognition task to assess objective intelligibility. In this task, participants listened to words extracted from the 20 non-native speech samples and wrote down the words they heard as accurately as possible. The percentage of words correctly recognized for each speaker was computed as that speaker’s word intelligibility score. The subjective measures of speech quality were compared to word intelligibility, and also to acoustic measures of fluency (e.g. speech rate and pause duration) and of sound properties (e.g. certain features of consonants and vowels) related to pronunciation accuracy.
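The word intelligibility score described above can be illustrated with a minimal sketch. The data layout (speaker ID, target word, listener transcription), the function name, and the scoring rule (case-insensitive exact match) are all assumptions made for illustration; the study does not specify how transcriptions were matched against targets.

```python
from collections import defaultdict


def word_intelligibility(responses):
    """Return the percentage of correctly recognized words per speaker.

    `responses` is an iterable of (speaker_id, target_word, transcribed_word)
    tuples. This layout is hypothetical, not the study's actual data format.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for speaker, target, heard in responses:
        total[speaker] += 1
        # Assumed scoring rule: exact match, ignoring case and outer spaces.
        if target.strip().lower() == heard.strip().lower():
            correct[speaker] += 1
    return {s: 100.0 * correct[s] / total[s] for s in total}


# Invented example responses, not data from the study.
responses = [
    ("S01", "lecture", "lecture"),
    ("S01", "office", "offers"),
    ("S02", "student", "Student"),
    ("S02", "exam", "exam"),
]
scores = word_intelligibility(responses)
print(scores)
```

In practice a more lenient rule (for example, accepting homophones or obvious spelling errors) might be preferred; the exact-match rule here is only the simplest option.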

Results showed that subjective quality measures of listening effort, intelligibility and acceptability were highly correlated with one another and, to a lesser extent, with word recognition, and were most strongly predicted by acoustic measures of fluency (listed in Table 1). In contrast, acoustic measures related to pronunciation accuracy did not predict either word recognition or subjective speech quality ratings. These results suggest that factors related to speech fluency, and not those related to pronunciation accuracy, have the greatest effect on native listeners’ subjective evaluations of acceptability, intelligibility and listening effort.
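As a rough illustration of how a fluency measure might be related to a subjective rating, the sketch below computes a Pearson correlation coefficient in plain Python. This is a simplified stand-in: the statistical model actually used in the study is not described here, and the numbers below are invented purely for demonstration, not taken from the results.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for constant input")
    return sxy / math.sqrt(sxx * syy)


# Invented values for five hypothetical speakers (not study data):
# speech rate in syllables per second, and mean listening-effort rating (1-20).
speech_rate = [2.1, 2.8, 3.4, 3.9, 4.5]
listening_effort = [17, 14, 11, 8, 5]

r = pearson_r(speech_rate, listening_effort)
print(f"r = {r:.3f}")  # negative here: faster speech, lower rated effort
```

A negative coefficient in such an analysis would correspond to the pattern reported above, in which more fluent speech receives lower listening-effort ratings.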

Furthermore, statistical analyses revealed no difference in pronunciation accuracy between low- and high-proficiency non-native speakers. However, the two groups did differ significantly in terms of fluency measures. Given that both groups had clearly identifiable non-native accents in their pronunciations, these results suggest that achieving proficiency may depend more on developing fluent speech patterns and less on attaining pronunciations that are perceived as native-like to native speakers. Although acquiring more native-like pronunciations may be desirable, the present study suggests that it is fluency, not pronunciation, that best differentiates low- from high-proficiency non-native speakers. Speakers with better fluency also received higher subjective ratings of intelligibility as well as acceptability, and lower ratings of listening effort, suggesting that native listeners are better able to cope with divergent pronunciations if they appear within otherwise fluent speech.

Why, and through what mechanism, then, does fluency affect the intelligibility and acceptability of non-native speech? One possibility is that greater fluency contributes to intelligibility by making listening less effortful. Because listeners are not working so hard to keep track of what the speaker is trying to say, they can devote more effort to figuring out what sounds the speaker intended to produce. According to this argument, less fluent speech is more difficult to understand because it is slower, has more and longer pauses, and often has pauses in unexpected places, so listeners must remember what was said earlier in the sentence for a longer period of time. If this is the case, improving fluency may in and of itself reduce listening effort and improve the intelligibility and acceptability of non-native speech. We are currently conducting another experiment to test this hypothesis.

In conclusion, the results of the present study suggest that speech fluency may matter more than pronunciation accuracy for the intelligibility and acceptability of non-native speech. This finding has direct implications for second language instruction and assessment, suggesting that it may be more efficient to start by working with learners to improve speaking fluency, rather than focusing scarce teaching resources on immediately trying to improve the accuracy with which specific speech sounds are produced.

References:

Ginther, A., Dimova, S., & Yang, R. (2010). Conceptual and empirical relationships between temporal measurements of fluency. Language Testing, 27, 379-399.

OEPP. (2014). The Oral English Proficiency Program. http://www.purdue.edu/oepp/.

Xi, X., & Mollaun, P. (2006). Investigating the utility of analytic scoring for the TOEFL academic speaking test (TAST). TOEFLiBT-01.