Donal G. Sinex - sinex@asu.edu

Department of Speech and Hearing Science

Arizona State University

Box 870102

Tempe, AZ 85287-0102

Popular version of paper 4pPP4

Presented Thursday Afternoon, May 27, 2004

147th ASA Meeting, New York, NY

Humans exhibit a remarkable ability to separate sounds produced by multiple sources that overlap in frequency and in time. Even using only one ear, we can understand one person's speech even though others are talking at the same time, or hear different instruments in an orchestra. Psychophysical experiments have identified some of the acoustic cues that make this possible, but little is known about how the auditory system processes those cues. Also, it is not known why it is much more difficult for hearing-impaired listeners to process competing sounds. For that reason, it is important to identify and understand the mechanisms by which the normal auditory system separates simultaneous signals. We have found dramatic differences in the neural representation of waveforms that are perceived as two sources, compared to the representation of similar waveforms perceived as single sounds. These response differences were observed in the auditory midbrain, but not at lower levels of the auditory system, indicating they arise from specialized processing in the brainstem.

When two (or more) sounds are present at the same time, the signal that arrives at the ear includes all the components that make up the spectrum of each individual sound source. The process of separating the competing sounds is assumed to involve two basic steps. In the first step, called spectral segregation, the components in the mixture associated with each individual sound source are isolated. In the second, the components belonging to each source are grouped. Our results address the first part of the process, spectral segregation.

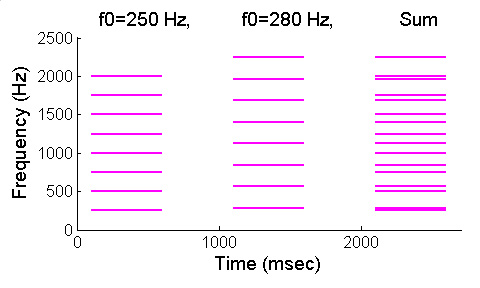

One powerful cue for spectral segregation is "harmonicity". Harmonic tones have energy only at integer multiples of a fundamental frequency. Sounds that have different fundamental frequencies (and so harmonics at different frequencies) differ in pitch and are relatively easy to segregate, even when the sounds have approximately the same overall bandwidth. Waveform 1 demonstrates segregation based on harmonicity. There are three sounds. The first is a single complex tone consisting of 8 harmonics of fundamental frequency 250 Hz. The second is a single complex tone consisting of 8 harmonics of fundamental frequency 280 Hz; you will hear a higher pitch. The third is a mixture of the first two tones. Although the spectra overlap, as shown in Figure 1, you still hear the individual complex tones with two different pitches. As described above, it is assumed that the auditory system first segregates and then groups the components associated with each tone.

Figure 1
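The stimuli in Waveform 1 can be approximated in a few lines. This is a minimal NumPy sketch, not the stimulus code used in the study: the sample rate, duration, equal component amplitudes, and sine starting phase are assumptions, harmonics 1 through 8 are assumed (consistent with the fourth harmonic of 250 Hz being 1000 Hz), and the helper name `harmonic_complex` is hypothetical. It builds the 250-Hz and 280-Hz complexes, sums them, and lists the spectral peaks of the mixture, showing the two interleaved, overlapping sets of harmonics.

```python
import numpy as np

FS = 40000  # sample rate in Hz (assumed; not stated in the text)
DUR = 0.5   # duration in seconds (assumed)

def harmonic_complex(f0, n_harmonics=8, fs=FS, dur=DUR):
    """Equal-amplitude, sine-phase sum of harmonics 1..n_harmonics of f0 (hypothetical helper)."""
    t = np.arange(int(fs * dur)) / fs
    freqs = f0 * np.arange(1, n_harmonics + 1)  # f0, 2*f0, ..., n*f0
    wave = np.sin(2 * np.pi * freqs[:, None] * t).sum(axis=0) / n_harmonics
    return wave, freqs

tone_250, freqs_250 = harmonic_complex(250)  # harmonics at 250, 500, ..., 2000 Hz
tone_280, freqs_280 = harmonic_complex(280)  # harmonics at 280, 560, ..., 2240 Hz
mixture = tone_250 + tone_280                # the third sound in Waveform 1

# Magnitude spectrum of the mixture; with these settings the bins are 2 Hz apart.
spectrum = np.abs(np.fft.rfft(mixture))
bin_freqs = np.fft.rfftfreq(len(mixture), d=1 / FS)
peaks = bin_freqs[spectrum > 0.5 * spectrum.max()]

print(peaks)  # 16 interleaved components spanning 250 to 2240 Hz
```

With a 0.5-s duration every component completes a whole number of cycles, so each one falls exactly on an FFT bin and the 16 peaks stand out cleanly from the rest of the spectrum.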

When one component in a harmonic complex tone is "mistuned", or shifted in frequency, the mistuned component may be perceived as a second sound. Waveform 2 illustrates this effect. There are two sounds. The first is the same as the 250-Hz harmonic tone in Waveform 1. Individual components in harmonic tones like this one cannot be distinguished from one another. In the second tone, the fourth harmonic has been mistuned by 12%, from 1000 Hz to 1120 Hz, and a second pitch is heard. The auditory system has segregated the mistuned component and processed it as if it arose from a different sound source than the other, harmonically related components.
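The mistuning in Waveform 2 can be sketched the same way, again as a hypothetical NumPy illustration with an assumed sample rate, duration, amplitudes, and phases (the names `mistuned_complex` and `repeats_every` are illustrative, not from the study). It does not model what the auditory system does; it only shows, in the waveform itself, that the shifted component no longer fits the harmonic pattern. A harmonic complex repeats exactly once per fundamental period (4 ms for 250 Hz), whereas moving the fourth harmonic from 1000 Hz to 1120 Hz (1000 × 1.12) breaks that repetition.

```python
import numpy as np

FS = 40000  # sample rate in Hz (assumed; chosen so one 4-ms period is exactly 160 samples)
DUR = 0.5   # duration in seconds (assumed)

def mistuned_complex(f0=250.0, n_harmonics=8, mistuned_harmonic=4, percent=12.0,
                     fs=FS, dur=DUR):
    """Equal-amplitude, sine-phase harmonic complex with one harmonic shifted up by `percent`."""
    t = np.arange(int(fs * dur)) / fs
    freqs = f0 * np.arange(1, n_harmonics + 1, dtype=float)
    freqs[mistuned_harmonic - 1] *= 1 + percent / 100.0  # 4th harmonic: 1000 Hz -> 1120 Hz
    wave = np.sin(2 * np.pi * freqs[:, None] * t).sum(axis=0) / n_harmonics
    return wave, freqs

def repeats_every(wave, period_samples, tol=1e-9):
    """True if the waveform matches itself shifted by `period_samples`."""
    return np.allclose(wave[:-period_samples], wave[period_samples:], atol=tol)

period = int(FS / 250)  # one period of the 250-Hz fundamental = 160 samples

harmonic, _ = mistuned_complex(percent=0.0)              # first sound in Waveform 2
mistuned, mistuned_freqs = mistuned_complex(percent=12.0)  # second sound in Waveform 2

print(mistuned_freqs[3])                # approximately 1120 Hz
print(repeats_every(harmonic, period))  # True: every component fits the 250-Hz pattern
print(repeats_every(mistuned, period))  # False: the 1120-Hz component does not
```

Over one 4-ms period the 1120-Hz component completes 4.48 cycles rather than a whole number, which is why the shifted waveform fails the repetition check.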

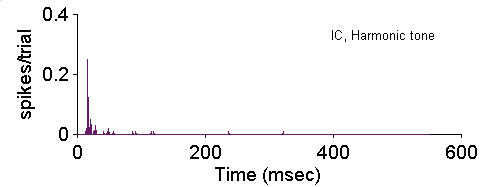

Harmonic and mistuned tones like these are useful for studying the neural mechanisms that contribute to spectral segregation. They are complex enough to be perceived as if two sound sources are present, suggesting that they engage the same neural mechanisms that lead to the perception of separate sources in more complex acoustic environments. They are also simple enough to be used in neurophysiological experiments. We recorded the responses of neurons in the inferior colliculus (IC), a mid-level auditory structure, and in the auditory nerve, a low-level structure, to tones like those in Waveform 2.

Harmonic complex tones usually elicited responses from IC neurons that had no temporal pattern or, in some cases, a simple pattern that followed the envelope of the complex tone. An example of one kind of unpatterned response is shown in Figure 2; in this case, the 500-msec tone elicited a response only at its onset:

Figure 2

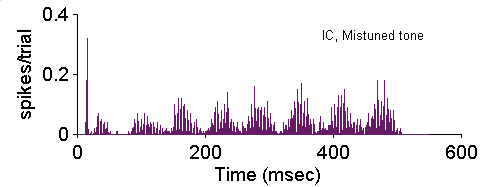

Mistuning that led to the perception of a new sound source also produced dramatic changes in the temporal discharge patterns of IC neurons. Figure 3 shows the discharge pattern of the same IC neuron to the same tone with the fourth harmonic mistuned by 12%. The temporal pattern as well as the overall magnitude of the response was strongly affected by mistuning. Responses similar to this one were observed in many IC neurons. The temporal response patterns changed systematically when the amount of mistuning was changed.

Figure 3

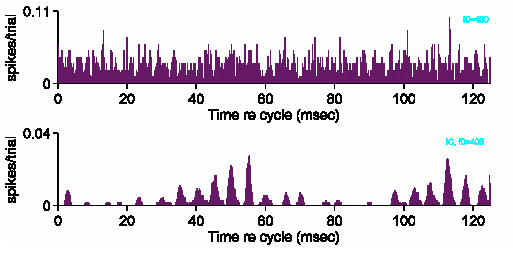

Response changes like these were not observed in the auditory nerve, indicating that neural processing in the brainstem creates the complex patterns from simpler inputs. Figure 4 compares the responses elicited by one mistuned tone in the auditory nerve (upper panel) and in a comparable IC neuron (lower panel). As in the previous example, the response of the IC neuron to the mistuned tone was distinctly patterned and very different from the response in the auditory nerve. Auditory nerve fibers responded similarly to harmonic and mistuned tones, whereas in the IC mistuning produced large response changes.

Figure 4

These results indicate that the processing of complex tones undergoes a major transformation in the lower brainstem. The distinctive response patterns associated with mistuned tones convey information to more central structures, information that eventually leads to the perception of two sounds. These patterns are therefore likely to contribute to perceptual segregation based on harmonicity. These results lay the groundwork for future research that could address how the information in the temporal pattern is decoded, or how the pattern might be degraded when hearing is impaired.